The current market for generative media is saturated with “Swiss Army Knife” solutions. For creative operations leads, the challenge is no longer finding a tool that can generate an image; it is finding a system that survives the rigors of a high-volume production pipeline. Feature checklists—those ubiquitous tables comparing “Text-to-Image” or “Upscaling” capabilities—are increasingly useless. They fail to account for the operational friction that occurs when a creative team moves from a single prompt to a thousand-asset campaign.

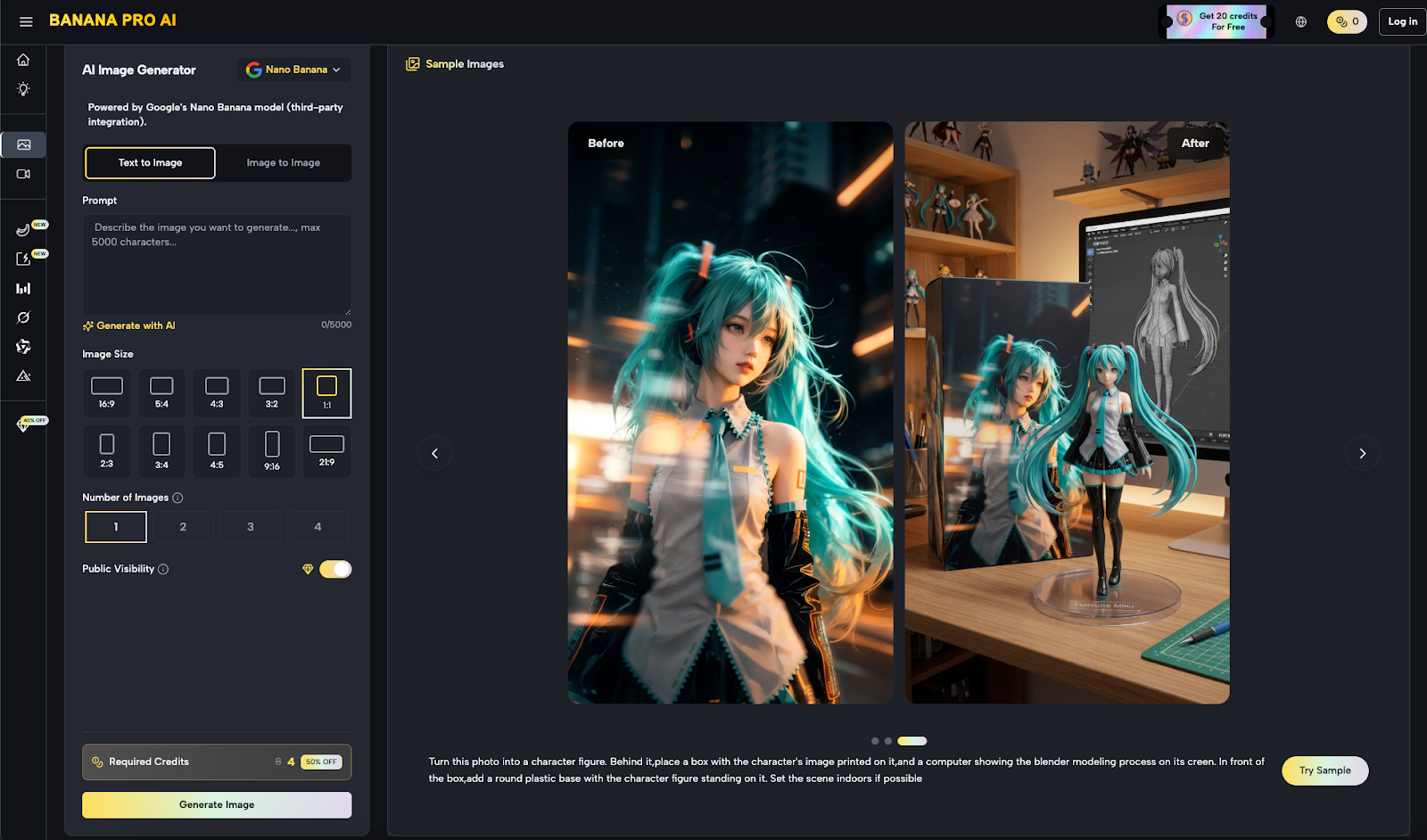

To evaluate platforms like Banana AI or more specialized interfaces, we must move toward a stress-test framework. This approach prioritizes throughput, consistency, and the “time-to-edit” rather than the initial “wow” factor of a single generation. When the goal is a repeatable asset pipeline, the infrastructure matters more than the novelty of the algorithm.

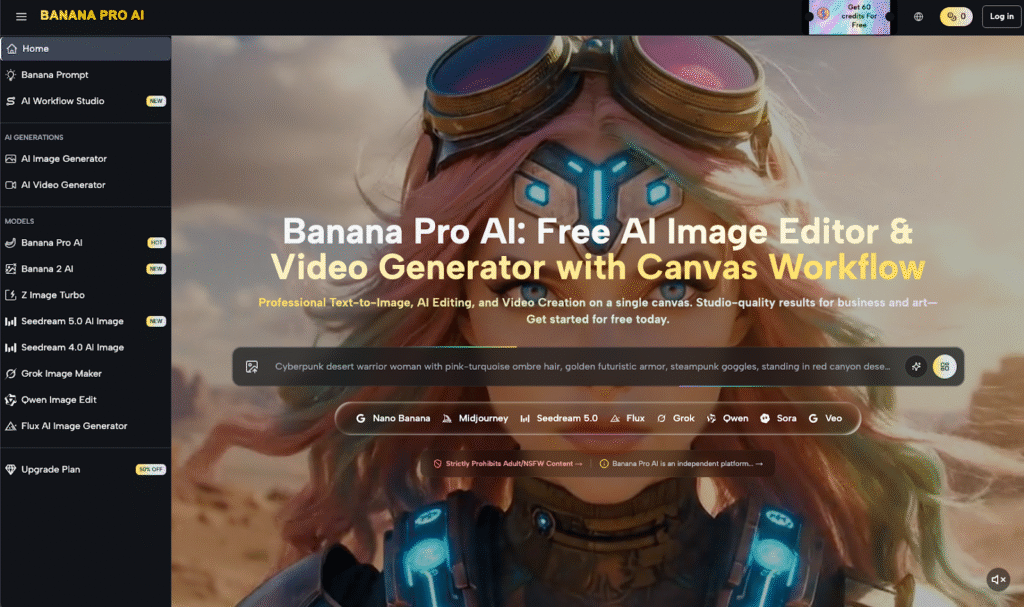

The Fallacy of the All-in-One Dashboard

Most procurement decisions in the AI space are driven by a broad feature set. A tool claims to be an AI Image Editor, a video generator, and a community hub all at once. However, for a lead building a production-ready workflow, these generalist labels mask the real question: How much manual labor is required to fix what the AI breaks?

A generic feature list will tell you that a platform supports image-to-image transformations. It won’t tell you if the model’s latent space is “sticky” enough to maintain a brand’s specific color palette across twenty iterations. In our evaluation of the Banana Pro ecosystem, we focus less on the presence of a feature and more on the degree of control the user retains over the output variables.

Stress Test 1: Style Coherence and Persistence

The first pillar of a professional evaluation is style coherence. In a marketing context, generating one beautiful image is easy; generating ten images that look like they belong to the same brand identity is notoriously difficult.

When testing tools, creative ops should move beyond “cool” prompts. Instead, use a “Seed-Lock Stress Test.” Generate an initial asset, then attempt to change one minor variable—lighting, perspective, or subject matter—while keeping the stylistic “DNA” intact. Many tools fail here because their underlying models drift too far with every new token added to a prompt.
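One way to make the Seed-Lock Stress Test repeatable is to write the test plan down as data before touching any tool. The sketch below is a hypothetical illustration, not any platform's API: `GenerationRequest`, its fields, and `build_seed_lock_plan` are assumed names, and it presumes the tool under test exposes a seed setting at all. It only builds a list of requests that each differ from the baseline in exactly one variable, so that any stylistic drift can be attributed to that single change.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical request shape; real tools expose different settings."""
    style: str
    subject: str
    lighting: str
    perspective: str
    seed: int


# Fields that must stay fixed for the test to be meaningful.
LOCKED_FIELDS = {"style", "seed"}


def build_seed_lock_plan(baseline, variations):
    """Return requests that each change exactly one unlocked field."""
    plan = []
    for field_name, values in variations.items():
        if field_name in LOCKED_FIELDS:
            raise ValueError(f"{field_name!r} is locked and cannot be varied")
        for value in values:
            plan.append(replace(baseline, **{field_name: value}))
    return plan


def changed_fields(a, b):
    """List the field names whose values differ between two requests."""
    first, second = asdict(a), asdict(b)
    return [name for name in first if first[name] != second[name]]


# Placeholder values for illustration only.
baseline = GenerationRequest(
    style="flat pastel illustration",
    subject="running shoe",
    lighting="studio softbox",
    perspective="three-quarter view",
    seed=1234,
)
plan = build_seed_lock_plan(
    baseline,
    {"lighting": ["golden hour", "overcast"], "perspective": ["top-down"]},
)
for request in plan:
    print(changed_fields(baseline, request), request)
```

Each request in the plan can then be run by hand or through whatever interface the tool offers, and the outputs reviewed side by side against the baseline.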

Platforms that integrate a robust canvas workflow, such as the one found within the Banana Pro interface, allow for a more modular approach. By treating the generation as a layer rather than a final product, teams can mask, regenerate, and extend assets without losing the foundational composition. This moves the workflow away from “gambling with prompts” and toward traditional art direction.

Stress Test 2: Latency vs. Fidelity in High-Volume Drafting

Production speed is often hampered by a misunderstanding of model size. Large-parameter models produce stunning results but carry significant inference costs and time delays. For the drafting phase of a project, where a team might need to cycle through a hundred concepts in an hour, high-fidelity models are often a bottleneck.

This is where the utility of smaller, more agile models comes into play. Within the current landscape, a tool like Nano Banana Pro is a prime example of tiered production. Using a “micro-model” for rapid prototyping allows a team to establish composition and narrative flow before committing the compute resources required for high-resolution final renders.

It is a common mistake to use the most powerful model for every stage of production. An evidence-first approach shows that using a lightweight version like Nano Banana for the initial “sketching” phase can reduce the discovery cycle by up to 70%.

The Role of the Nano Banana in Rapid Iteration

When we look at the specific application of the Nano Banana model, we see its value in the “low-stakes” generation phase. This isn’t about creating a final billboard; it’s about seeing if a concept works in 1.5 seconds rather than 30.
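The arithmetic behind that difference is worth spelling out. The sketch below uses the two per-image figures quoted above and the hundred-concept drafting target from the previous section. It assumes generations run one after another and counts only waiting time, not review time; the function name is our own.

```python
def waiting_minutes(concepts: int, seconds_per_image: float) -> float:
    """Total sequential generation time in minutes."""
    return concepts * seconds_per_image / 60


print(waiting_minutes(100, 30))   # 50.0
print(waiting_minutes(100, 1.5))  # 2.5
```

Under those assumptions, a hundred-concept round at 30 seconds per image spends most of the hour waiting, while the same round at 1.5 seconds leaves nearly all of it for review.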

However, we must acknowledge a critical limitation: micro-models often struggle with complex anatomy or intricate text rendering. In our testing, we found that while the Nano Banana is exceptional for layout and color blocking, it still requires a hand-off to a higher-fidelity model like Banana AI for the final polish. This hand-off process is the real stress test for any pipeline.

Stress Test 3: The “In-Painting” and Regional Control Audit

A professional AI Image Editor is defined by what it can change, not just what it can create. For creative leads, the “In-Painting” tool is the most scrutinized feature. The stress test here involves “Contextual Awareness.”

Can the tool recognize that a person added to a reflection-heavy environment needs a matching reflection, along with a shadow consistent with the existing light? Many generative tools operate in a vacuum, ignoring the existing pixels when a new element is introduced.

To evaluate this, we recommend a “Destructive Edit Test.” Take a high-quality asset, remove a critical central element, and ask the AI to fill it with something stylistically dissonant (e.g., a futuristic gadget in a Victorian setting). The tool’s ability to blend the lighting and texture of the dissonant object into the original frame determines its readiness for professional compositing.

Understanding the Infrastructure: Banana AI and Beyond

There is a significant difference between a wrapper and a workflow. Many tools are simply thin interfaces over existing open-source models. While there is nothing inherently wrong with this, it creates a dependency on external updates that can break a production pipeline overnight.

A more stable approach involves platforms that curate their model weights and offer a variety of specialized “engines.” For instance, Banana AI provides a baseline of reliability that is often missing from experimental, prompt-driven sites. When comparing tools, ask the provider about their model versioning. Can you “lock in” a specific version of a model for the duration of a six-month project, or will the “Banana Pro” engine you use today be replaced by a different iteration tomorrow?
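Whatever the provider's answer, a team can record the version it signed off on and check for silent changes. The following is a minimal sketch under stated assumptions: the engine name, the version string, and the idea that the platform reports a version identifier with each job are all hypothetical, and no real Banana AI or Banana Pro API is implied.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelPin:
    """The engine and version a project was approved against (placeholders)."""
    engine: str
    version: str


def check_pin(pin: ModelPin, reported_engine: str, reported_version: str) -> List[str]:
    """Return a list of mismatches between the pin and what a job reports."""
    problems = []
    if reported_engine != pin.engine:
        problems.append(f"engine changed: {pin.engine} -> {reported_engine}")
    if reported_version != pin.version:
        problems.append(f"version changed: {pin.version} -> {reported_version}")
    return problems


# Hypothetical values; substitute whatever identifiers the provider exposes.
pin = ModelPin(engine="example-engine", version="2025-01-15")
print(check_pin(pin, "example-engine", "2025-01-15"))  # []
print(check_pin(pin, "example-engine", "2025-03-02"))
```

If the provider exposes no version identifier at all, that absence is itself a useful answer to the versioning question.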

Expectation Management: The Human-in-the-Loop Reality

One of the greatest uncertainties in the current generative media landscape is the “Prompt Sensitivity Gap.” Even with the same tool and identical settings, two users will get wildly different results depending on how they phrase their prompts and how well they understand the latent space.

We must reset expectations: No generative tool currently exists that can replace a trained designer’s eye for composition and color theory. The tools simplify the execution, but the creative direction remains manual. We have found that even the most advanced workflows, including those involving Nano Banana Pro, require a significant human-in-the-loop presence to ensure brand compliance and aesthetic quality.

Furthermore, we cannot ignore the “Resolution Wall.” Most models generate at a native resolution that is insufficient for print or 4K video. While upscalers exist, they often introduce “hallucinations”—small, unwanted details that look like digital noise. A skeptical evaluator must look at the “raw” output before the upscaler hides the model’s flaws.
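The size of the gap is easy to estimate. The sketch below converts a print size to pixels and computes the upscale factor a given native output would need. The 1024 by 1024 native resolution is an assumed example, not a measured figure for any model named here; 300 DPI is a common print target, and 3840 by 2160 is the UHD 4K frame size.

```python
def print_pixels(width_in: float, height_in: float, dpi: int = 300):
    """Pixel dimensions needed to print at the given size and DPI."""
    return round(width_in * dpi), round(height_in * dpi)


def upscale_factor(native, target) -> float:
    """Smallest uniform scale at which the native image covers the target."""
    return max(target[0] / native[0], target[1] / native[1])


native = (1024, 1024)            # assumed native output size
letter = print_pixels(8.5, 11)   # (2550, 3300) for US Letter at 300 DPI
uhd_4k = (3840, 2160)

print(letter, round(upscale_factor(native, letter), 2))
print(uhd_4k, round(upscale_factor(native, uhd_4k), 2))
```

The factor is the scale needed to cover the target in both dimensions, so a square output would still have to be cropped or extended to fit either format.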

Building the Comparison Matrix

When you are ready to compare your options, do not use a spreadsheet of features. Use a matrix of “Unit Costs” and “Time to Final.”

The Efficiency Matrix

* Discovery Phase: How many variations can be produced in 10 minutes? This is where Nano Banana excels.

* Refinement Phase: How many clicks are required to change a specific asset’s color palette?

* Export Phase: Does the tool offer the necessary layers or alpha channels for post-production in software like After Effects or Photoshop?

If a tool like Banana Pro allows for a canvas-based workflow, it usually scores higher on the refinement phase because it bypasses the “re-prompting” loop that plagues chat-based interfaces.
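The matrix above can be kept as a small data structure instead of a feature spreadsheet. In this sketch every field name and all the sample numbers are placeholders to be replaced with a team's own measurements. “Unit cost” is taken to mean total spend divided by approved final assets, and “time to final” the average minutes from first draft to approval, which is our reading of the two terms.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class ToolTrial:
    """One tool's results from a timed trial (all values are placeholders)."""
    name: str
    variations_in_10_min: int        # discovery phase
    clicks_to_recolor: int           # refinement phase
    exports_layers_or_alpha: bool    # export phase
    total_spend: float
    approved_assets: int
    minutes_to_approval: List[float]  # one entry per approved asset

    @property
    def unit_cost(self) -> float:
        """Spend per approved asset; infinite if nothing was approved."""
        if self.approved_assets == 0:
            return float("inf")
        return self.total_spend / self.approved_assets

    @property
    def time_to_final(self) -> float:
        """Average minutes from first draft to approval."""
        return mean(self.minutes_to_approval)


trials = [
    ToolTrial("Tool A", 60, 3, True, 120.0, 40, [10, 20, 30]),
    ToolTrial("Tool B", 25, 9, False, 90.0, 20, [5, 15]),
]

for trial in sorted(trials, key=lambda t: t.unit_cost):
    print(trial.name, trial.unit_cost, trial.time_to_final,
          trial.variations_in_10_min, trial.clicks_to_recolor,
          trial.exports_layers_or_alpha)
```

Sorting by unit cost is only one possible view; a team that is deadline-bound might sort by time to final instead.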

The Limitation of Benchmarking

Treat any “benchmark” results with caution. Generative AI is evolving at a pace that renders six-month-old data obsolete. What we measure as “state-of-the-art” today, whether it’s the speed of Banana AI or the precision of a specific AI Image Editor, is subject to the next leap in transformer architecture or diffusion techniques.

Additionally, a provider’s hardware orchestration varies with load. A tool that performs perfectly during a Tuesday morning demo might experience significant latency during peak hours on a Friday. Any professional evaluation must include testing at different times of day and week to ensure the “production-ready” claim holds up under global traffic.
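A simple way to compare those conditions is to log generation latency in several time windows and look at the tail, not just the average. The sketch below assumes you have already collected latencies in seconds per window. The window labels and sample values are made up for illustration, and the nearest-rank percentile used here is one common definition among several.

```python
import math


def percentile(samples, p: int):
    """Nearest-rank percentile for an integer p between 1 and 100."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be between 1 and 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


def summarize(latencies_by_window):
    """Median and 95th-percentile latency for each time window."""
    return {
        window: {"p50": percentile(samples, 50), "p95": percentile(samples, 95)}
        for window, samples in latencies_by_window.items()
    }


# Illustrative numbers only, not measurements of any real tool.
observed = {
    "tuesday_morning": [2.1, 2.3, 2.0, 2.4, 2.2],
    "friday_peak": [2.2, 6.8, 3.1, 9.5, 2.9],
}
for window, stats in summarize(observed).items():
    print(window, stats)
```

A tool whose median barely moves but whose 95th percentile balloons at peak will feel fine in a demo and slow in production, which is exactly the gap this kind of test is meant to expose.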

Toward a Sustainable Creative Pipeline

The goal for any creative operations lead is to move away from the “magic” of AI and toward the “utility” of AI. This requires a shift in mindset: stop looking for the smartest model and start looking for the most controllable workflow.

Whether you are integrating Banana Pro into a small boutique agency or a large-scale marketing department, the metrics remain the same. Can the tool handle the “boring” parts of the job—resizing, masking, and style-matching—with 99% accuracy? If the answer is yes, then the feature list is secondary.

In conclusion, the decision to adopt a tool should be based on its performance in a “Stress-Test” environment that mimics your actual production demands. Don’t be swayed by the highlights on a landing page; instead, look for the friction points in the iteration loop. That is where the real cost of production lives.